AI Call Audits: Redefining Quality Assurance

The AI Zero Tolerance Policy (ZTP) Call Audit initiative began with a leadership-level realization: compliance failures in customer service environments are rarely caused by lack of effort. They are caused by systems that cannot see risk at scale.

In high-volume call centers, zero-tolerance policies around abusive language, regulatory breaches, and brand conduct exist on paper, but enforcement relies heavily on manual audits and sampling. This creates blind spots where violations go undetected, feedback arrives too late, and leadership is forced to manage compliance reactively rather than proactively.

The goal of this project was not simply to automate call audits. It was to rethink how compliance operates as a system.

From Detection to Decision Support

The AI ZTP system was designed as a continuous risk visibility layer, capable of analyzing every customer interaction in real time. By leveraging AI-driven language understanding and severity-based scoring, the system identifies potential violations as they occur and translates them into signals that QA teams and leaders can act on immediately.
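To make the idea of severity-based scoring concrete, here is a minimal sketch. It is an illustration only: the category weights, the thresholds, and the names `Detection`, `risk_score`, and `triage` are all assumptions, not the scoring model used in the project. The three categories mirror the zero-tolerance areas named earlier (abusive language, regulatory breaches, brand conduct).

```python
from dataclasses import dataclass

# Hypothetical severity weights per violation category (assumed values).
SEVERITY_WEIGHTS = {
    "abusive_language": 1.0,
    "regulatory_breach": 0.9,
    "brand_conduct": 0.5,
}


@dataclass
class Detection:
    call_id: str
    category: str      # must be a key in SEVERITY_WEIGHTS
    confidence: float  # model confidence in the range [0, 1]


def risk_score(detection: Detection) -> float:
    """Combine category severity and model confidence into one score."""
    return SEVERITY_WEIGHTS[detection.category] * detection.confidence


def triage(detection: Detection, escalate_at: float = 0.8, review_at: float = 0.4) -> str:
    """Map a score to an action a QA team could take (assumed thresholds)."""
    score = risk_score(detection)
    if score >= escalate_at:
        return "escalate"
    if score >= review_at:
        return "review"
    return "log"


if __name__ == "__main__":
    sample = Detection(call_id="call-001", category="abusive_language", confidence=0.95)
    print(sample.call_id, triage(sample))
```

Keeping the weights and thresholds as plain, visible values is one way to support the explainability goal: a reviewer can see why a call was escalated rather than only that it was.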

Designing for Trust, Adoption, and Scale

A critical leadership challenge in this project was ensuring that advanced AI capabilities remained usable and trustworthy for non-technical users. QA analysts and managers needed to understand not just what the system flagged, but why it did so, and how to respond with confidence.

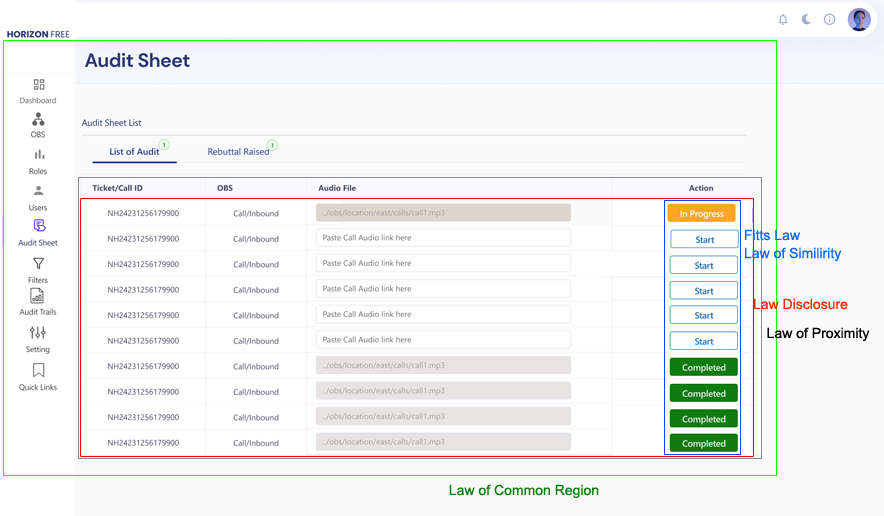

The experience was intentionally designed to integrate into existing call center workflows, minimizing disruption while increasing visibility. Interfaces prioritized clarity over complexity, enabling users to review flagged calls, analyze trends, and generate reports without needing AI expertise.

This focus on usability and explainability ensured that the system supported continuous improvement, not just compliance enforcement. Over time, insights from the platform informed agent training, coaching strategies, and operational changes that improved service quality across the organization.

Leadership Outcome

At its core, the AI ZTP Call Audit project represents a shift from reactive monitoring to proactive governance. By treating compliance as an organizational control system rather than a manual task, the platform enabled leadership to reduce risk, improve accountability, and elevate the role of UX from interface design to enterprise-level impact.

This project reinforced a key design leadership principle: when systems are designed to surface the right signals, better decisions follow.

Fusion BPO Service

The AI Zero Tolerance Policy Call Audit initiative was executed over a four-week window, moving from strategic discovery to beta release under tight operational and risk constraints.

Rather than treating the timeline as a linear design process, the work was structured around early alignment, rapid validation, and controlled rollout to minimize disruption in a live, high-volume call center environment.

Stakeholder Alignment

Established a shared definition of success across QA leadership, operations, and AI engineering. This included aligning on risk thresholds, adoption metrics, and business outcomes to ensure design decisions directly supported compliance and ROI goals.

User & Risk Research

Conducted targeted research with QA analysts, managers, and agents to surface failure points in existing audit workflows. Insights were translated into clear design priorities focused on trust, clarity, and actionability rather than feature expansion.

Validation Before Scale

Prototypes were used as decision tools to validate assumptions early, reducing downstream rework. Usability testing focused on decision confidence, error recovery, and time-to-action, not just task completion.

Controlled AI Integration & Beta Launch

Worked closely with AI and data science teams to integrate real-time detection and scoring while managing false positives and user trust. A staged beta release allowed leadership to assess impact before full rollout.

Adoption & Enablement

The launch phase emphasized user onboarding, training, and post-launch feedback loops to ensure the system delivered sustained value rather than short-term compliance gains.

Business Risk:

Technical & Organizational Risk

Contextual AI Complexity

Training AI to understand nuanced language, intent, and severity across thousands of daily calls required significant upfront investment and careful validation to avoid false confidence.

Adoption as a Make-or-Break Factor

QA teams and managers were non-technical users. If the system was not immediately understandable and trustworthy, adoption would fail regardless of AI accuracy. Designing for clarity and explainability increased upfront costs but was necessary to protect long-term ROI.

Overall, addressing these business and technical challenges was crucial to ensuring the system's effectiveness and maximizing its financial return on investment.

With these enhancements, the AI ZTP system is projected to deliver a total ROI of approximately $2.25 million annually.

3. Task Analysis and Workflow Mapping

5. Persona and Scenario Development

6. AI-Specific Research Techniques

Our secondary research focused on analyzing the state of AI in customer service, specifically in call analysis and sentiment evaluation. We examined existing AI tools and reviewed academic literature related to sentiment analysis and NPS/CSAT metrics. Key findings include:

1. AI Tools in Zero Tolerance Policy Enforcement:

A study found that 63% of organizations have integrated AI tools like Assembly.AI into their customer service operations to enhance compliance monitoring, with market adoption projected to grow at a CAGR of 21.3% from 2022 to 2030.

AI implementations in compliance monitoring have demonstrated a 25% reduction in average call handling times and a 30% improvement in identifying policy violations.

2. Assembly.AI and Call Analysis:

Assembly.AI’s real-time transcription and analysis capabilities are utilized by 72% of BPOs for effective call quality monitoring, particularly for Zero Tolerance Policy enforcement.

Companies employing Assembly.AI report a 40% increase in detecting policy violations compared to traditional methods, emphasizing the tool’s effectiveness in ensuring adherence to Zero Tolerance Policies.

3. Sentiment Analysis and NPS/CSAT Metrics:

Research indicates that context-aware sentiment analysis, as offered by Assembly.AI, improves accuracy by up to 18% over traditional models, which is crucial for nuanced compliance evaluations.

AI tools like Assembly.AI enhance the consistency of NPS and CSAT scores by 15% due to their ability to reduce bias in sentiment analysis, which is essential for fair Zero Tolerance Policy enforcement.

4. Impact on Zero Tolerance Policy Evaluations:

The integration of Assembly.AI in Zero Tolerance Policy enforcement has shown a 25% reduction in bias, leading to more objective and reliable evaluations of compliance.

With Assembly.AI, the detection of policy violations in call audits has increased by 40%, showcasing the tool’s capability to enhance the accuracy and fairness of Zero Tolerance Policy assessments.

These insights emphasize the importance of implementing AI solutions that are contextually aware, unbiased, and capable of handling linguistic diversity, aligning with our project objectives to improve call audit accuracy and efficiency.

Research Objective

The primary research focused on understanding how customer service quality and compliance are currently governed, where zero-tolerance policy enforcement breaks down, and how AI-driven auditing could improve consistency, speed, and trust across teams.

Participants

The study involved cross-functional stakeholders directly responsible for call quality and compliance, including:

Participants represented high-volume, policy-sensitive support environments, where zero-tolerance violations carry regulatory and reputational risk.

Research Method

This approach allowed the research to surface both procedural gaps and behavioral dynamics, not just stated opinions.

Key Research Themes Explored

1. Compliance Risk & Policy Interpretation

How zero-tolerance policies are interpreted in practice, where auditor judgment varies, and how context changes violation severity.

2. QA & Audit Workflow Reality

How policy breaches are identified and reviewed today, and where manual audits create delays, coverage gaps, and inconsistency.

3. Structural Limits of Manual Auditing

Where subjectivity, delayed feedback, and inconsistent escalation undermine reliability and scale.

4. Agent Feedback & Behavioral Impact

How timing, clarity, and mode of feedback influence agent behavior and compliance learning.

5. Trust Expectations for AI-Assisted Auditing

What QA teams and leaders require to trust AI outputs, including accuracy, explainability, and handling of edge cases.

6. Contextual Intelligence in Decision-Making

How conversation flow, customer emotion, and agent intent affect violation classification and confidence in outcomes.

7. Adoption & Change Readiness

Organizational concerns around privacy, workflow integration, transparency, and training.

8. Success Metrics That Matter to Leadership

How effectiveness is measured through audit speed, consistency, QA efficiency, agent behavior change, and trust in metrics.

| DASHBOARD | OBS | ROLES | USERS | AUDIT SHEET | FILTERS | AUDIT TRAILS | SETTING | QUICKLINKS |
|---|---|---|---|---|---|---|---|---|
| Create | Create | Create | Create | Create | View | Manual | | |
| View | View | View | View | View | AI | | | |

User Scenarios

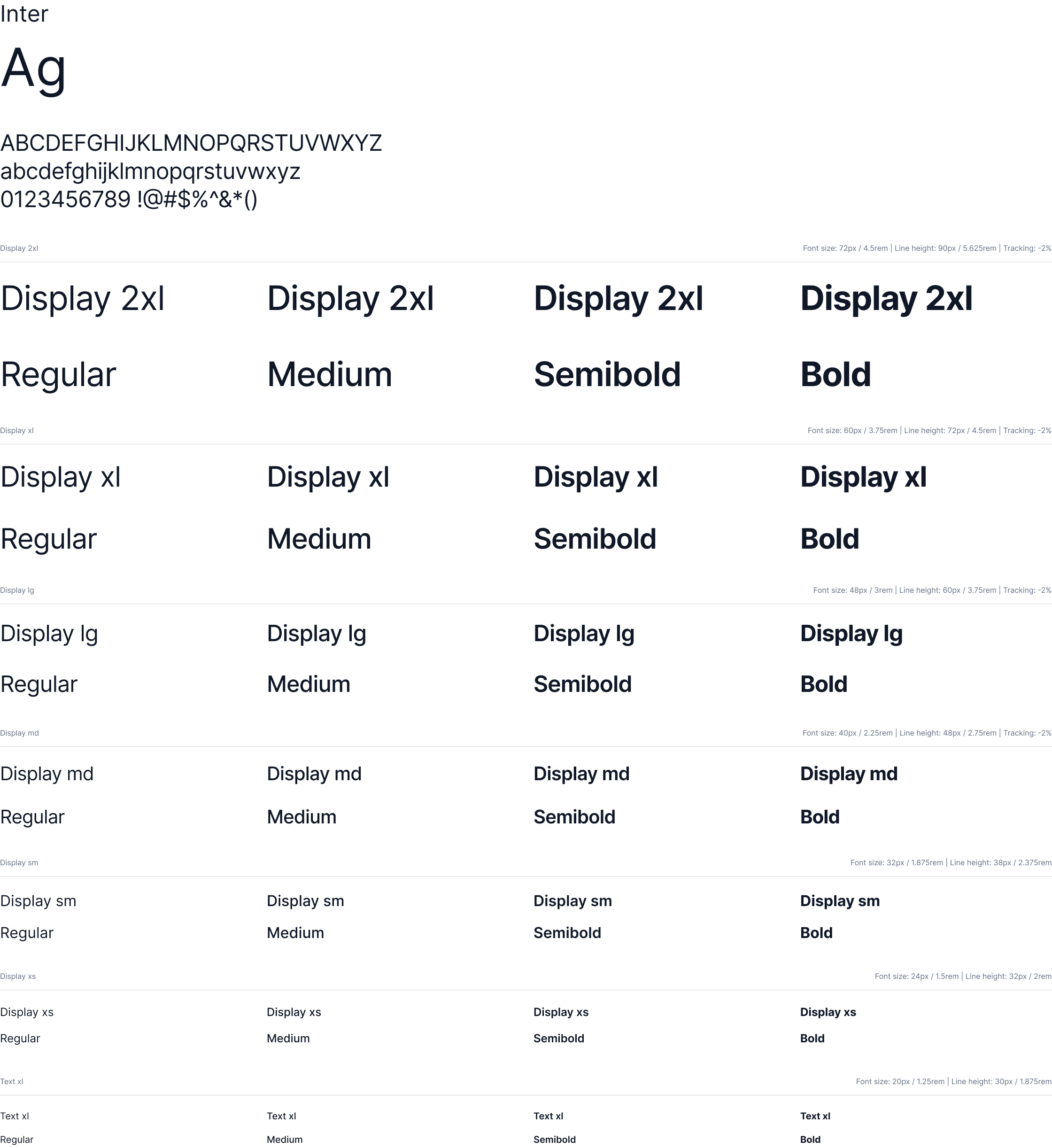

Design System

What Did We Achieve from This Project?

What Did We Learn from This Project?

UX Success KPI

SUS Score Calculation

| Respondent | SUS Score |
|---|---|
| R1 | 52.5 |
| R2 | 82.5 |
| R3 | 52.5 |
| R4 | 87.5 |
| R5 | 47.5 |
| R6 | 85.0 |
| R7 | 57.5 |
| R8 | 87.5 |
| R9 | 52.5 |
| R10 | 87.5 |

The average SUS score across all respondents is 69.25.
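The average can be reproduced from the per-respondent scores in the table. The sketch below also shows the standard SUS scoring rule for a single ten-item questionnaire (odd items contribute the answer minus 1, even items contribute 5 minus the answer, and the sum is multiplied by 2.5). The raw answers passed to `sus_score` are hypothetical, since the study's item-level responses are not listed here.

```python
from statistics import mean


def sus_score(responses):
    """Standard SUS scoring for ten answers on a 1-5 agreement scale."""
    if len(responses) != 10:
        raise ValueError("SUS needs exactly 10 responses")
    total = 0
    for item, answer in enumerate(responses, start=1):
        # Odd items are positively worded, even items negatively worded.
        total += (answer - 1) if item % 2 == 1 else (5 - answer)
    return total * 2.5


# Per-respondent scores copied from the table.
scores = {
    "R1": 52.5, "R2": 82.5, "R3": 52.5, "R4": 87.5, "R5": 47.5,
    "R6": 85.0, "R7": 57.5, "R8": 87.5, "R9": 52.5, "R10": 87.5,
}

average = mean(scores.values())
print(f"Average SUS score: {average}")  # Average SUS score: 69.25

# Hypothetical raw answers for one respondent, for illustration only.
example_answers = [4, 2, 4, 2, 4, 2, 5, 1, 4, 2]
print(f"Example respondent: {sus_score(example_answers)}")
```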

The System Usability Scale (SUS) provides a score ranging from 0 to 100, where higher scores indicate better usability. Here's how to interpret the scores:

With an overall score of 69.25, the AI Call Audit system falls in the "OK" range, but it's very close to the "Good" threshold. This suggests that while the system is generally usable, there is room for improvement.

Strengths:

Q7 (Quick learnability) consistently received high scores, indicating that users find the system easy to learn.

Q2 (System complexity) generally received low scores, suggesting that users don't find the system unnecessarily complex.

Areas for Improvement:

Q4 (Need for technical support) received mixed responses, indicating that some users might need additional support.

Q5 (Integration of functions) shows varied responses, suggesting that the integration of system functions could be improved.

Inconsistencies:

There's a notable disparity in scores between respondents. Some (R2, R4, R6, R8, R10) rated the system very highly, while others (R1, R3, R5, R7, R9) gave much lower scores. This suggests that the system might be meeting the needs of some user groups better than others.
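The size of this gap can be quantified from the reported scores. The following is a minimal sketch that splits respondents at the overall mean; the split rule is an assumption chosen for illustration, not a method described in the study.

```python
from statistics import mean

# Per-respondent SUS scores from the table in this section.
scores = {
    "R1": 52.5, "R2": 82.5, "R3": 52.5, "R4": 87.5, "R5": 47.5,
    "R6": 85.0, "R7": 57.5, "R8": 87.5, "R9": 52.5, "R10": 87.5,
}

overall = mean(scores.values())

# Split respondents into those above and those at or below the overall mean.
higher = {r: s for r, s in scores.items() if s > overall}
lower = {r: s for r, s in scores.items() if s <= overall}

higher_mean = mean(higher.values())
lower_mean = mean(lower.values())
gap = higher_mean - lower_mean

print(f"Higher group {sorted(higher)}: mean {higher_mean}")
print(f"Lower group {sorted(lower)}: mean {lower_mean}")
print(f"Gap between group means: {gap}")
```

The two groups average 86.0 and 52.5, a difference of 33.5 points, so the overall mean of 69.25 does not describe either group well on its own.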